#7 Deeptech Analysed - Discover OpenAI 3D Modeling: empowering designers with faster, smarter and more accurate solutions

Revolutionise your designs with OpenAI 3D Modeling in just a few minutes

What is going on?

Remember DALL-E, the text-based image generator? Or ChatGPT, the robot capable of holding conversations, answering questions, and producing texts on all sorts of subjects with an almost human style and naturalness?

OpenAI, the American artificial intelligence company, has just presented a new tool that is as original and impressive as it is creative.

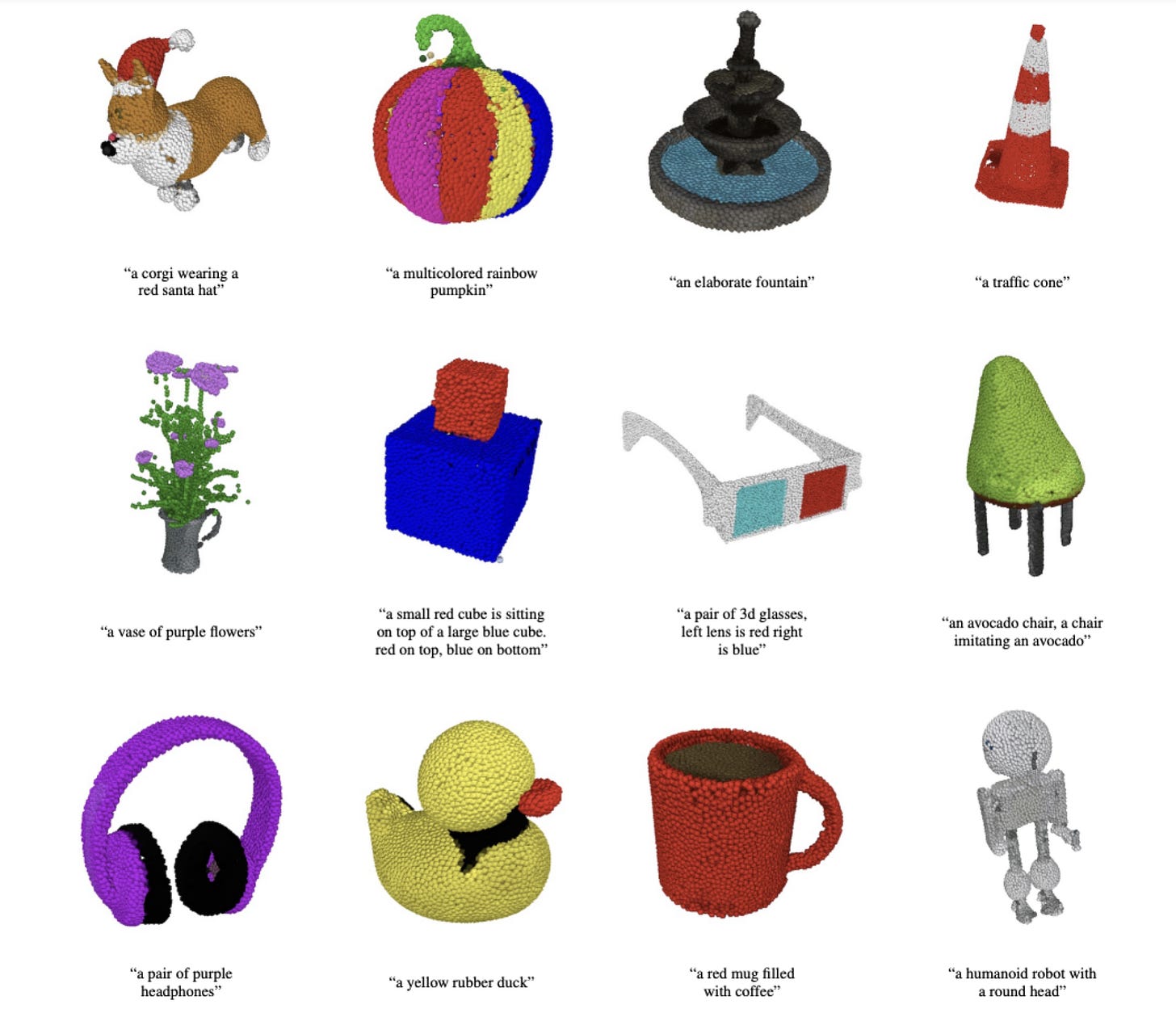

Called Point-E, it aims to generate colored 3D (three-dimensional) models from simple text prompts.

What does it mean?

With a single sentence, you can produce a 3D point cloud in a few minutes.
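For readers new to the term, a point cloud is simply a list of points in space, each with a position and, here, a color. The sketch below is only an illustration of that idea: the `ColoredPoint` class and `to_ascii_ply` helper are hypothetical names, not part of Point-E's code, and the three points are written by hand. It serializes the cloud to ASCII PLY, a common plain-text format for 3D data.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ColoredPoint:
    """One sample of a point cloud: a position plus an RGB color (0-255)."""
    x: float
    y: float
    z: float
    r: int
    g: int
    b: int


def to_ascii_ply(points: List[ColoredPoint]) -> str:
    """Serialize the points as an ASCII PLY document (hypothetical helper)."""
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "property uchar red",
        "property uchar green",
        "property uchar blue",
        "end_header",
    ]
    body = [f"{p.x} {p.y} {p.z} {p.r} {p.g} {p.b}" for p in points]
    return "\n".join(header + body) + "\n"


# A tiny hand-written cloud; a generated cloud would contain thousands of points.
cloud = [
    ColoredPoint(0.0, 0.0, 0.0, 255, 0, 0),
    ColoredPoint(1.0, 0.0, 0.0, 0, 255, 0),
    ColoredPoint(0.0, 1.0, 0.0, 0, 0, 255),
]
ply_text = to_ascii_ply(cloud)
```

Note that nothing in this structure says how the points connect to one another: a point cloud records where the surface is sampled, not the surface itself.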

Admittedly, it is not the best software available in terms of visual quality, so don't expect a 3D model like those used in movies or video games. However, to date, it is by far the fastest text-to-3D generator. Where existing systems like Google's DreamFusion usually require several hours and a lot of computing power to generate a result, Point-E needs only a single GPU and a few minutes of work.

The process is quite simple. The user enters a sentence describing the desired object; the artificial intelligence first produces a synthetic view of that object, then a point cloud that gives the overall shape of the model. "Our method first generates a single synthetic view using a text-to-image diffusion model, and then produces a 3D point cloud using a second diffusion model which conditions on the generated image," explains OpenAI. To put it more simply, Point-E uses not one but two AIs: one that translates text into an image - like DALL-E or Stable Diffusion - and a second that translates that image into a 3D model.
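The two-stage idea can be sketched as a simple chain of functions. This is a structural illustration only, under loud assumptions: `text_to_3d`, `fake_text_to_image` and `fake_image_to_points` are hypothetical names, and the two "fake" stages are trivial placeholders that do no diffusion at all. They stand in for the real text-to-image and image-to-3D models so that the data flow is visible.

```python
from typing import Callable, List, Tuple

Image = List[List[Tuple[int, int, int]]]       # rows of RGB pixels
PointCloud = List[Tuple[float, float, float]]  # xyz positions


def text_to_3d(
    prompt: str,
    text_to_image: Callable[[str], Image],
    image_to_points: Callable[[Image], PointCloud],
) -> PointCloud:
    """Chain the two stages: text -> synthetic view -> point cloud."""
    synthetic_view = text_to_image(prompt)
    return image_to_points(synthetic_view)


def fake_text_to_image(prompt: str) -> Image:
    """Placeholder for stage 1: a 2x2 gray image (not a real generator)."""
    shade = len(prompt) % 256
    return [[(shade, shade, shade)] * 2 for _ in range(2)]


def fake_image_to_points(image: Image) -> PointCloud:
    """Placeholder for stage 2: one point per pixel on a flat plane."""
    return [
        (float(col), float(row), 0.0)
        for row, pixels in enumerate(image)
        for col, _ in enumerate(pixels)
    ]


points = text_to_3d("a red traffic cone", fake_text_to_image, fake_image_to_points)
```

The design point is that the second stage never sees the text: it only receives the image produced by the first stage, which is what lets each model specialize in one translation step.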

This is the trick that makes 3D generation faster. Above all, it is very easy to use. It is a welcome advance for generative artificial intelligence systems, which are very popular right now. The Point-E models and code are available in OpenAI's GitHub repository, and since the project is open source, anyone can inspect it.

Why does it matter?

💸For markets: The beginnings of a new wave of 3D generation?

Point-E is not a commercially ready project. It is foundational research that may eventually lead to on-demand, rapid 3D model creation, a bit like the GPT-3.5 models on which ChatGPT is built. With further work, it may make virtual world creation easier and more accessible to those without professional 3D graphics skills. Or perhaps it will simplify the creation of 3D-printed objects, since the point clouds it generates can serve as a starting point for product fabrication.

How long will we have to wait before a new wave of software makes generating 3D objects as easy as DALL-E or Midjourney made generating images?

This is definitely a technology that could be applied to many different sectors in the future.

🧑🏿🤝🧑🏻For society: On the road to greater development of 3D printing?

The 3D models generated by Point-E can be used to make real objects with a 3D printer. An average person could generate a whole range of objects to use in daily life. While this offers many possibilities, it could also be used to manufacture dangerous objects such as weapons, which would then become easily accessible to the general public.

For now, this remains in the world of research, but in a few years it could become mainstream to search for or generate a 3D object that we could own within minutes. Going even further, we could link Web 3.0 and generated 3D objects by creating NFTs that are registered directly on the blockchain and could even be transferred into the virtual worlds that may exist in the near future.

See for example Holoride: turning vehicles into moving theme parks.

🔮What’s next?

As revolutionary as it is, Point-E is far from perfect. While point clouds are easy to synthesize, they do not capture an object's fine-grained shape or texture well.

To get around this limitation, OpenAI trained an additional AI model to convert the point clouds into meshes. A mesh is a geometric data structure that describes an object as a set of polygons, defined by their vertices, edges, and faces.
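To make the mesh definition concrete, here is a minimal sketch of a triangle mesh for a tetrahedron, the simplest closed solid. It is an illustration of the data structure only and says nothing about how Point-E itself builds meshes; the `edges_of` helper is a hypothetical name. Vertices are positions, faces are triples of vertex indices, and edges are derived from the faces.

```python
from typing import List, Set, Tuple

Vertex = Tuple[float, float, float]
Face = Tuple[int, int, int]  # three indices into the vertex list

# Four corner points of a tetrahedron.
vertices: List[Vertex] = [
    (0.0, 0.0, 0.0),
    (1.0, 0.0, 0.0),
    (0.0, 1.0, 0.0),
    (0.0, 0.0, 1.0),
]

# Four triangular faces, each connecting three of the vertices.
faces: List[Face] = [
    (0, 2, 1),
    (0, 1, 3),
    (0, 3, 2),
    (1, 2, 3),
]


def edges_of(mesh_faces: List[Face]) -> Set[Tuple[int, int]]:
    """Collect each undirected edge once, as a sorted pair of vertex indices."""
    edges: Set[Tuple[int, int]] = set()
    for a, b, c in mesh_faces:
        for u, v in ((a, b), (b, c), (c, a)):
            edges.add((min(u, v), max(u, v)))
    return edges


edges = edges_of(faces)

# For a closed surface without holes, V - E + F equals 2 (Euler's formula).
euler_characteristic = len(vertices) - len(edges) + len(faces)
```

Unlike a bare list of points, the faces record which points belong to the same piece of surface, which is what renderers and 3D printers need.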

The AI is not yet fully mature and sometimes misses parts of the 3D model. But as noted earlier, we are at the beginning of the democratization of this kind of technology, and it could evolve very quickly. We can expect these programs to advance much faster than before, a bit like image and text generators did. As a reminder, those generators more or less stagnated for years before experiencing a spectacular explosion.

The possibilities offered by Point-E should be of interest to many sectors, as 3D models are widely used in film, television, interior design, architecture and industry - for the creation of vehicles, appliances or structures for example.

As an example, imagine if a Point-E-like program could produce AAA-caliber 3D models from a single sentence describing an object from the studio's universe. It would be a revolution in the video game world, and for good reason: this modeling work is exceptionally time-consuming.

With this constraint removed, small game studios could achieve a level of quality comparable to that of studios doing high-quality modeling today. At the same time, they could significantly increase their rate of production.

The same applies to the movie industry, where some films require a great deal of 3D work. Think of Avatar. OpenAI hopes its AI "can serve as a starting point for further work in the field of 3D synthesis."

The company isn't alone in this. Earlier this year, Google unveiled DreamFusion, while Epic Games has designed an application that generates a 3D object using photos taken with a smartphone.